Data Scientist? Programmer? Are They Mutually Exclusive?

Ann Spencer | 2018-04-12 | 7 min read

This Domino Data Science Field Note blog post provides highlights of Hadley Wickham’s ACM Chicago talk, “You Can’t Do Data Science in a GUI”. In his talk, Wickham argues that code, unlike a GUI, provides reproducibility, data provenance, and the ability to track changes, so that data scientists can see how a data analysis has evolved. As the creator of ggplot2, Wickham unsurprisingly also advocates using visualizations and models together to help data scientists find the real signals within their data. This blog post also provides clips from the original video and follows the Creative Commons license affiliated with the original video recording.

Data Science: Value in the Iterative Process

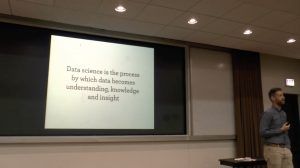

Hadley Wickham, Chief Scientist at RStudio, presented an ACM Chicago talk, “You Can’t Do Data Science in a GUI”, that covered data science workflows and tools. For example, Wickham advocates the use of visualizations and models to help data scientists find the real signals within their data. He also suggests leveraging a programming language for benefits that include reproducibility, data provenance, and the ability to see how data analysis has evolved over time, and he touches upon what he favors about the R programming language. From his perspective, using a GUI does not allow for these benefits, which are particularly important because he defines data science, according to a slide from this talk, as “the process by which data becomes understanding, knowledge, and insight”. GUIs deliberately obfuscate the process, as you can only do what the GUI inventors want you to do.

Visualizations and Models

Before diving into the “why program?” portion of his talk, Wickham discusses two “main engines” that help data scientists understand what is going on within a data set: visualization and models. Visualization is a tool that enables a data scientist to iterate on a “vague question” and refine it, or to take the question and try “to make it sufficiently precise so that you can answer it quantitatively.” Wickham also advocates the use of visualization because it may surprise a data scientist or lead them to see something they didn’t expect to see. During the talk, Wickham indicates “that the first visualization you look at will always reveal a data quality error, and if it doesn’t reveal a data quality error, that just means you haven’t found one yet.” Yet he also indicates that visualizations do not scale particularly well and suggests using models to complement visualizations. Models are used when data scientists are able to make their questions precise enough to use an algorithm or summary statistic. Models are more scalable than visualizations because “it is way easier to throw more computers at the problem than it is to throw more brains at it.”
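To make the interplay between the two engines concrete, here is a minimal sketch in Python (the talk itself focuses on R). The `readings` data, the `-999` missing-value sentinel, and the `text_histogram` helper are all hypothetical and are not taken from the talk. The sketch shows how a summary statistic alone can hide a data quality error that even a crude histogram exposes.

```python
import math
from collections import Counter
from statistics import mean

# Hypothetical sensor readings; -999 is an assumed "missing value" sentinel.
readings = [21.5, 22.0, 21.8, -999, 22.3, 21.9, -999, 22.1]

def text_histogram(values, bin_width=250):
    """Count values per fixed-width bin, keyed by the bin's lower edge."""
    bins = Counter(math.floor(v / bin_width) * bin_width for v in values)
    return dict(sorted(bins.items()))

# The summary statistic alone looks odd but does not say why.
print("mean of raw readings:", mean(readings))

# The (text) visualization shows two values sitting far away from the rest.
for lower_edge, count in text_histogram(readings).items():
    print(f"{lower_edge:>6} | {'#' * count}")

# Once the question is precise ("mean of valid readings"), the model scales.
clean = [v for v in readings if v != -999]
print("mean of valid readings:", mean(clean))
```

The design choice here mirrors the argument above: the plot is used once, by a person, to find the surprise, and the cleaned-up summary statistic is the part that can be handed to more computers.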

Why Program? Reproducibility, Provenance, and Tracking Changes

Wickham advocates using a programming language, rather than a GUI, to do data science because it provides the opportunity to reproduce work, understand the data provenance (which is also linked to reproducibility), and see how the data analysis has evolved over time. Two workflows that Wickham points out as particularly useful are cut-and-paste and using Stack Overflow. While these were mentioned in humor, code is text, and it is easy to cut and paste text, including error messages, into Stack Overflow to find a solution. Also, understanding data provenance enables reproducibility because it enables the data scientist to “rerun that code with a new data later on, and get updated results you can use”. Working with code also enables data scientists to share their work openly, for example on GitHub. In this portion of his talk, Wickham references a project on GitHub where people can see the series of commits, drill down on the commits, and see “where data analysis is now but you can see how it evolved over time.” He contrasts this with Excel, which makes it possible for people to accidentally randomize their data without knowing the provenance or having a rollback option.
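As a rough illustration of rerunning code on new data, the following Python sketch (Python rather than R, purely for illustration) wraps a whole analysis in one function. The `analyze` function, the CSV layout, and the idea of storing a SHA-256 hash of the input as a simple provenance record are assumptions made for this example, not something described in the talk.

```python
import csv
import hashlib
import io
from statistics import mean

def analyze(csv_text):
    """Run the full analysis from raw CSV text; return results plus provenance."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    values = [float(row["value"]) for row in rows]
    return {
        # Fingerprint of the exact input, so a result can be traced to its data.
        "input_sha256": hashlib.sha256(csv_text.encode("utf-8")).hexdigest(),
        "n_rows": len(values),
        "mean_value": mean(values),
    }

# Hypothetical data: the original extract, then a later extract with a new row.
original = "id,value\n1,10\n2,20\n3,30\n"
updated = original + "4,40\n"

first = analyze(original)   # the initial analysis
second = analyze(updated)   # the same code, rerun on new data

print(first["n_rows"], first["mean_value"])
print(second["n_rows"], second["mean_value"])
```

Because the analysis is plain text, a file like this could also be committed to version control, which is what makes the commit-by-commit history mentioned above possible.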

Why Program in R? Despite the Quirks? Packages.

As Wickham is the Chief Scientist at RStudio, he indicates that while he thinks “Python is great too”, he “particularly loves R”. A few of R’s features that appeal to Wickham include:

- how it is a functional programming language, which allows you to “solve problems by composing functions in various ways”

- how R provides the ability to look at the structure of the code

- how R “gives us this incredible power to create little domain specific languages that are tailored to certain parts of data science process”.

This also leads to the potential to build R packages. Wickham indicates that packages allow people to solve complex problems by breaking them down into smaller pieces, smaller pieces that can be taken from one project and then “recombined in different ways” (reproducibility), to solve a new problem.
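The idea of composing small functions and recombining them can be sketched as follows. This is a Python illustration rather than R, and the `compose` helper and the small step functions are hypothetical names chosen for this example.

```python
from functools import reduce

def compose(*steps):
    """Return a function that applies the given steps left to right."""
    return lambda value: reduce(lambda acc, step: step(acc), steps, value)

# Small, single-purpose pieces.
def drop_missing(values):
    return [v for v in values if v is not None]

def to_celsius(values):
    return [(v - 32) * 5 / 9 for v in values]

def average(values):
    return sum(values) / len(values)

# The same pieces recombined in different ways to answer different questions.
mean_celsius = compose(drop_missing, to_celsius, average)
count_valid = compose(drop_missing, len)

temps_f = [32, None, 212, 122]  # hypothetical Fahrenheit readings
print(mean_celsius(temps_f))
print(count_valid(temps_f))
```

Each piece stays small enough to test on its own, and `drop_missing` is reused unchanged in both pipelines, which is the kind of reuse that packages formalize.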

Conclusion

As Wickham defines data science as “the process by which data becomes understanding, knowledge, and insight”, he advocates using data science tools where value is gained from iteration, surprise, reproducibility, and scalability. In particular, he argues that being a data scientist and being a programmer are not mutually exclusive and that using a programming language helps data scientists move toward understanding the real signal within their data. While this blog post only covers some of the key highlights from Wickham’s ACM Chicago talk, the full video is available for viewing.

Domino Data Science Field Notes provide highlights of data science research, trends, techniques, and more that help data scientists and data science leaders accelerate their work or careers. If you are interested in your data science work being covered in this blog series, please send us an email at writeforus(at)dominodatalab(dot)com.

Ann Spencer is the former Head of Content for Domino where she provided a high degree of value, density, and analytical rigor that sparks respectful candid public discourse from multiple perspectives, discourse that’s anchored in the intention of helping accelerate data science work. Previously, she was the data editor at O’Reilly, focusing on data science and data engineering.
